You’re on a deadline. The podcast outline is half done, the newsletter needs to go out, and that blog post you promised yourself you’d write “the old-fashioned way” is still blinking at you from a blank doc.

So you open ChatGPT and ask for a draft.

Then the panic hits.

Is using ChatGPT plagiarism? If you paste the output into your article, are you crossing a line? If you use it for research, summaries, or rewrites, are you still safe? And if you’re building a content business, not just posting for fun, how much risk are you taking with your reputation?

That tension is everywhere right now. A 2024 BestColleges survey found that 51% of students consider AI use on schoolwork to be plagiarism, yet 43% have used it anyway. That’s not a minor disagreement. That’s a giant fog bank sitting over the road.

Creators feel it too. Not because they’re trying to cheat, but because they’re trying to move faster without getting sloppy. They want help with brainstorming, scripting, outlining, editing, and repurposing. They don’t want to accidentally publish something that sounds original but isn’t.

The good news is that this question has a better answer than a simple yes or no.

Using ChatGPT can be plagiarism, but it isn’t automatically plagiarism. The primary issue is whether you’re misrepresenting authorship, reusing uncredited material, or publishing unverified output as if it were fully your own original work.

That means context matters. Process matters. Disclosure matters. And your workflow matters a lot more than many people realize.

The AI Elephant in the Room: Is Using ChatGPT Plagiarism?

A lot of creators are asking the wrong version of the question.

They ask, “Can I use ChatGPT?” when the better question is, “How am I using it, and what am I claiming about the result?”

If you’re a podcaster turning interviews into blog posts, a YouTuber repackaging scripts into email sequences, or a publisher mining a deep archive for fresh angles, AI feels like a superpower. It can help you start faster, sort ideas, summarize themes, and punch through the blank-page problem.

But AI also creates a strange kind of uncertainty.

Why people get stuck

Many were taught a simple plagiarism rule in school: don’t copy someone else’s words and pretend they’re yours. Easy enough.

ChatGPT scrambles that rule because it doesn’t hand you a neat citation trail. It gives you polished language that looks new. That’s why people freeze. The output feels original, but they can’t always tell where the language, structure, or ideas came from.

Practical rule: If you can’t explain how the draft was created, verified, and edited, you’re not ready to publish it.

For creators, the stakes aren’t just academic. They’re commercial.

If you publish AI-heavy work without checking it, you risk more than embarrassment. You can damage audience trust, create editorial problems, and muddy ownership questions across your content library.

The better framing

Think of ChatGPT like a talented intern who works at lightning speed and never sleeps, but sometimes hands you material with no receipts.

That doesn’t make the intern evil. It means you’re still the editor.

A responsible creator doesn’t ask AI to replace judgment. They use it to accelerate parts of the process that don’t depend on blind trust.

Here’s the short version:

- Using AI for brainstorming is usually low risk.

- Using AI for final wording without review is risky.

- Using AI to paraphrase source material without attribution can become plagiarism fast.

- Using AI transparently, with human review and source checks, is a defensible professional practice.

The confusion is real, but it’s manageable. Once you stop treating “AI use” as one giant category, the ethical picture gets much clearer.

What Plagiarism Really Means in 2026

Plagiarism isn’t really about the tool. It’s about intellectual honesty.

A simple way to think about it: if content creation is cooking, plagiarism isn’t “using a blender.” Plagiarism is serving someone else’s recipe, flavor profile, and plating as if you invented the dish.

What plagiarism covers

People often reduce plagiarism to copy-paste theft. That’s one form of it, but it’s not the whole menu.

Plagiarism can include:

- Direct copying of words without attribution

- Close paraphrasing that changes the wording but keeps the original structure or idea pattern

- Uncredited idea use when a specific insight or framework came from someone else

- Self-plagiarism when you reuse your own past work without disclosure where disclosure is expected

For creators, the danger zone often isn’t blatant theft. It’s the fuzzy middle. You ask AI to “rewrite this better,” and what comes back is cleaner, but still too close to the original source.

Tool use versus idea theft

Using a tool isn’t the same as stealing.

A spellchecker doesn’t plagiarize for you. A transcription app doesn’t plagiarize for you. An outlining assistant doesn’t plagiarize for you. ChatGPT also isn’t automatically plagiarism just because it helped.

The issue is whether you used the tool in a way that obscures authorship or source origins.

Here’s a clean distinction:

| Situation | Likely ethical status |
|---|---|
| You use ChatGPT to brainstorm headlines | Usually fine |
| You use ChatGPT to summarize your own transcript, then verify it | Usually fine |
| You paste an article into ChatGPT and publish its rewrite without attribution | Likely plagiarism |
| You ask ChatGPT for facts and publish them without checking sources | Risky and often irresponsible |

Why creators should care beyond school rules

Even outside school, plagiarism touches ownership, licensing, and attribution. If you work with writers, editors, contractors, brands, or publishers, it helps to understand the broader logic behind intellectual property protection. You don’t need to become a lawyer. You do need to know that originality claims have consequences.

And if your research process is shaky, your content gets shaky too. That’s why it helps to understand what counts as a reliable reference in the first place. This guide on credible sources is a useful checkpoint before you let AI summarize anything.

A plain-language test

Ask yourself three questions before publishing:

- Did this language or idea come from someone specific?

- Have I credited that source where the context requires it?

- Would my audience, editor, or instructor feel misled if they saw my exact process?

If your process looks fine only when hidden, it probably isn’t fine.

That’s the heart of it. Plagiarism rules exist because readers, editors, clients, and institutions need an honest signal about where work came from and who did it.

How AI Tools Can Inadvertently Plagiarize

ChatGPT doesn’t sit there plotting to steal paragraphs. The risk comes from how large language models generate text.

They predict likely word sequences based on patterns learned from huge training datasets. That means the system can produce language that feels fresh while still echoing familiar source material, especially in topics where explanations tend to become standardized.
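To see why pattern prediction drifts toward familiar phrasing, here is a toy sketch in Python. It is not how ChatGPT works internally (real models are large neural networks trained on tokens, not word-pair counts). The function names and the three-sentence corpus are made up for illustration. The point is only that a system choosing the most likely next word will tend to reproduce whatever phrasing dominates its training text.

```python
from collections import Counter, defaultdict


def build_bigram_counts(corpus: list[str]) -> dict[str, Counter]:
    """Count which word follows which across a tiny, made-up corpus."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts


def most_likely_continuation(counts: dict[str, Counter], start: str, length: int = 4) -> str:
    """Greedily append the most frequent next word, up to `length` times."""
    words = [start]
    for _ in range(length):
        options = counts.get(words[-1])
        if not options:
            break  # no known continuation for this word
        words.append(options.most_common(1)[0][0])
    return " ".join(words)


# Hypothetical training text: two sources share the same standard phrasing.
corpus = [
    "force equals mass times acceleration",
    "force equals mass times acceleration",
    "force equals the rate of change of momentum",
]
counts = build_bigram_counts(corpus)
print(most_likely_continuation(counts, "force"))
# force equals mass times acceleration
```

The output matches the most common source sentence word for word, even though nothing was "copied" in the usual sense. That is a simplified picture of the echo effect described above.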

Why some subjects are riskier than others

Technical writing is especially vulnerable.

A Copyleaks analysis found that nearly 60% of ChatGPT outputs contained some form of plagiarism, with the highest rates in technical subjects like Physics. In those areas, the model often reproduces formulaic explanations that resemble existing training material.

That makes sense when you think about it. There are only so many common ways to explain a physics concept, legal principle, or accounting method in short form. AI tends to drift toward those high-probability patterns.

Creative fields often allow more variation, so the model has more room to generate distinct phrasing. Technical domains often narrow the lane.

The sneaky version of plagiarism

The most confusing form isn’t obvious copying. It’s semantic plagiarism.

That’s when the wording changes, but the underlying structure, sequence of ideas, or borrowed substance stays too close to the source. It can also show up as patchwriting, where bits of source logic get lightly remodeled instead of transformed.

Here’s what that looks like in practice:

- You feed AI a competitor’s article and ask for a rewrite.

- It returns a cleaner version with different wording.

- The structure, examples, and takeaway order still mirror the original.

- You publish it as “new.”

That’s not a fresh article. That’s a costume change.

AI is good at remixing patterns. It is not naturally loyal to attribution.

Why typical users miss the problem

Most creators judge originality by feel.

If a paragraph doesn’t look copied, they assume it’s safe. But AI output can inherit source fingerprints without producing obvious duplication. That’s why “it sounds different” isn’t a reliable standard.

This is also why understanding the basics of natural language processing helps. Once you know these systems predict patterns rather than reason like human researchers, the plagiarism risk becomes easier to spot.

A simple mental model

Think of AI like a collage machine with a very smooth paintbrush.

It doesn’t usually hand you visible cut-and-paste scraps. It blends. That blend can still contain too much of the original picture.

Use AI when you need speed, ideation, or first-pass language. Don’t assume its polish equals originality. That’s the mistake that trips up smart people.

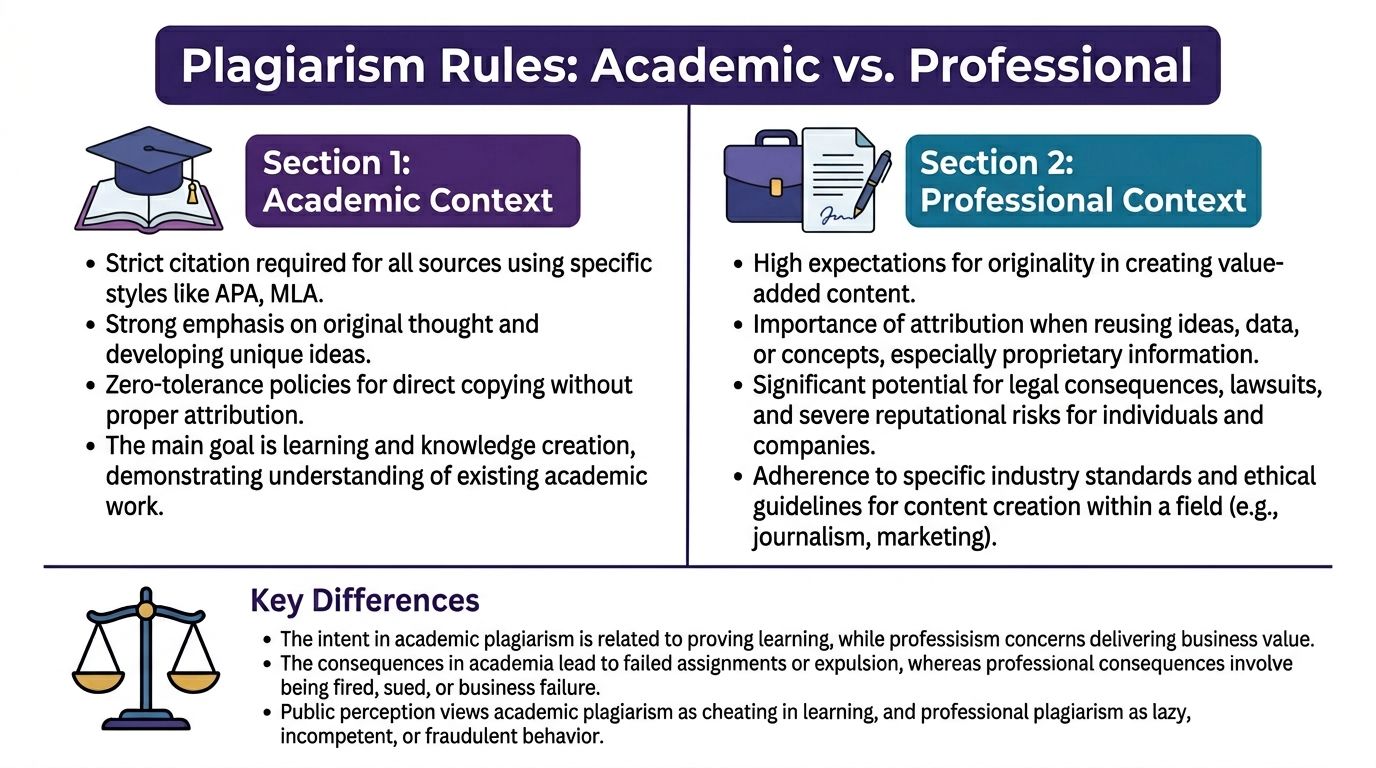

The Plagiarism Rules for Academic vs Professional Contexts

A lot of confusion comes from treating all plagiarism rules as if they work the same everywhere. They don’t.

A university seminar, a reported magazine feature, a ghostwritten founder post, and a YouTube script all carry different expectations. The core principle stays the same: don’t misrepresent authorship or source use. But enforcement and consequences vary a lot.

Academic context

In academia, the standard is usually strict because the work is supposed to reflect your learning, reasoning, and original engagement with sources.

A 2024 university study found that frequent ChatGPT use correlated with plagiarism, but it explained only 3.9% of the behavior, while a cheating culture among peers was a much stronger predictor. That matters because it shows the tool itself isn’t the whole story. Environment, norms, and motivation shape misuse.

Still, the classroom rule is simple: if the assignment expects your own thinking, undisclosed AI assistance can become an integrity issue quickly.

Common academic expectations include:

- Citation and disclosure: If the institution or instructor requires disclosure, you need to disclose.

- Original analysis: You can’t outsource your reasoning and still claim full authorship.

- Source accountability: If AI gives you facts, quotes, or summaries, you’re responsible for checking them.

For students preparing for high-stakes coursework, policy clarity matters. Resources like Magna AI for students taking AP exams can be useful because they focus on how learners should approach AI in structured educational settings.

Journalistic and editorial context

Journalism has its own version of strictness.

Readers expect reported facts, attributable quotes, and transparent sourcing. If a reporter uses AI to shape copy, the biggest question isn’t “Was AI involved?” It’s “Can every factual claim be defended and traced?”

That means problems show up when AI is used to:

- fabricate source material

- smooth over missing reporting

- paraphrase existing coverage too closely

- create authority without verification

A newsroom may allow AI for headline options, transcript cleanup, or draft organization. It won’t usually tolerate AI inventing facts or laundering someone else’s reporting through a chatbot.

Professional and creative context

Many creators operate in this realm: brand content, newsletters, scripts, course materials, product marketing, podcast notes, ebooks, and repurposed media assets.

The rules are looser than school, but the trust test is still real.

Here’s a practical comparison:

| Context | What matters most | Main risk |
|---|---|---|
| Academic | Proof of your own learning | Integrity penalties |
| Journalism | Verifiable sourcing and accuracy | Credibility loss |
| Marketing and creative | Originality, brand trust, rights clarity | Reputational and legal trouble |

In business content, audiences usually don’t demand footnotes for every sentence. They do expect that your claims are true, your examples aren’t stolen, and your “insights” aren’t just recycled competitor copy with a fresh intro.

The rule that travels across all contexts

The simplest cross-industry rule is this:

If AI helped you generate language, research, or structure, your responsibility went up, not down.

That responsibility looks different depending on the setting. A professor may require formal disclosure. A client may expect an originality warranty. A podcast audience may expect you not to fake expertise.

The safest approach is to match your AI use to the norms of the environment you’re publishing in. Don’t apply school rules blindly to marketing. Don’t apply casual creator norms to academic work. And don’t assume “everyone does it” will protect you when something goes sideways.

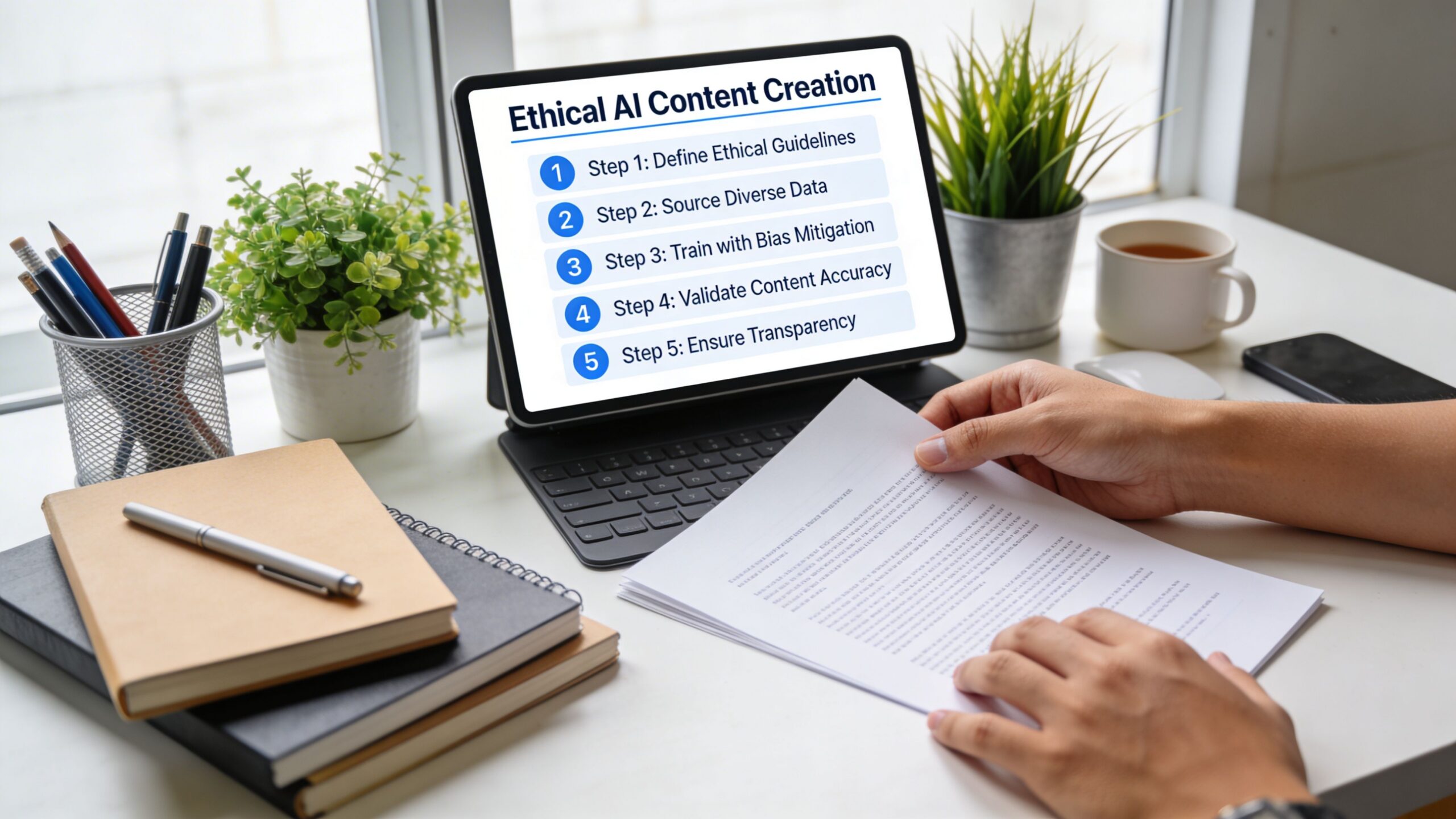

Your Workflow for Ethical AI-Assisted Content Creation

Most AI plagiarism problems don’t start with bad intentions. They start with a sloppy workflow.

If you use ChatGPT like a vending machine for finished copy, risk goes up fast. If you use it inside a documented editorial process, risk drops and quality usually improves.

Stage one, ideation

This is the safest place to use AI.

Ask for angle variations, title options, audience questions, objection lists, episode spin-offs, or content repurposing ideas. You’re using the tool as a brainstorming partner, not as a ghostwriter with a fake ID.

Good prompts at this stage include:

- Give me five audience questions a podcast listener might ask after this episode.

- Turn this webinar topic into blog, video, email, and LinkedIn angles.

- What counterarguments would a skeptical editor raise?

Low risk. High value.

Stage two, research

This stage is where many creators slip.

Use AI to help organize research, not to replace it. Let it suggest topics to investigate, summarize your own notes, or compare themes across material you already trust. Don’t let it become your unverified fact supplier.

A key problem here is detection confusion. Traditional plagiarism checkers often fail to flag AI content, while AI-specific detectors analyze perplexity (how predictable the wording is) and burstiness (how much sentence length and rhythm vary). One Honorlock discussion cites Winston AI at 99.98% accuracy. That tells you two things. First, copying risk and AI-use detection are not the same issue. Second, passing a plagiarism checker does not prove your draft is clean.
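If “burstiness” sounds abstract, a rough sketch helps. The Python below treats burstiness as variation in sentence length, measured as standard deviation divided by the mean. That definition is an assumption chosen for illustration, and the function names are hypothetical. It is not how Winston AI or any commercial detector scores text, and it says nothing about perplexity, which requires a language model to compute.

```python
import re
from statistics import mean, pstdev


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! and ? and return the word count of each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Toy proxy: spread of sentence lengths relative to their average.

    0.0 means every sentence has the same length; higher means more variation.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


uniform = "One two three. Four five six. Seven eight nine."
varied = "Stop. This sentence is quite a bit longer than the first one. Why?"

print(burstiness(uniform))  # 0.0, since all three sentences have three words
print(burstiness(varied) > burstiness(uniform))  # True
```

A score like this only describes rhythm. It cannot tell you whether text overlaps with a source, which is why the two kinds of checks answer different questions.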

Use this mini-checklist before moving on:

- Verify claims: Check every factual statement against original sources.

- Mark uncertain material: Highlight anything AI generated that you haven’t confirmed.

- Save inputs: Keep the source notes, transcript excerpts, or article links you used.

Stage three, drafting

Discipline is essential at this stage.

You can ask AI for a rough draft, but treat that draft like clay, not marble. Pull structure from it if it helps. Then rewrite in your own voice, insert your own examples, and remove generic filler.

A safer pattern looks like this:

- Write your thesis or point of view first.

- Feed AI your own notes, transcript, or outline.

- Ask for structure help, not final authority.

- Replace weak passages with original language and lived examples.

Here’s a useful gut check.

If you’d feel awkward showing the raw AI output next to your final draft, you probably haven’t transformed it enough.

Stage four, editing and proof

Final review is where you catch what speed created.

Read the draft aloud. Compare it to any source material that informed it. Run checks for duplicate phrasing if you relied heavily on external references. Confirm that examples, quotes, and statistics came from real, traceable places.
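One way to run a quick duplicate-phrasing check yourself is to compare short word sequences between your draft and a source. The Python sketch below is a minimal version of that idea, not a replacement for a real plagiarism checker. The function names and the five-word window are assumptions chosen for illustration, and the check only catches shared wording, not borrowed structure or ideas.

```python
import re


def word_ngrams(text: str, n: int = 5) -> set[tuple[str, ...]]:
    """Return the set of lowercase n-word sequences found in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def shared_phrases(draft: str, source: str, n: int = 5) -> list[str]:
    """List the n-word phrases that appear in both the draft and the source."""
    common = word_ngrams(draft, n) & word_ngrams(source, n)
    return sorted(" ".join(gram) for gram in common)


def overlap_ratio(draft: str, source: str, n: int = 5) -> float:
    """Fraction of the draft's n-word phrases that also appear in the source."""
    draft_grams = word_ngrams(draft, n)
    if not draft_grams:
        return 0.0
    return len(draft_grams & word_ngrams(source, n)) / len(draft_grams)


# Made-up example texts.
draft = "The quick brown fox jumps over the lazy dog today"
source = "A quick brown fox jumps over the lazy cat"

print(overlap_ratio(draft, source))  # 0.5
for phrase in shared_phrases(draft, source):
    print(phrase)
```

A high ratio is a prompt to rewrite or attribute. A low ratio is not a clean bill of health, because a paraphrase can change every phrase and still mirror the original.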

A practical editorial table helps:

| Workflow stage | Safe use | Risky use |
|---|---|---|
| Ideation | Brainstorm topics and formats | Asking for a complete publish-ready article |
| Research | Organizing trusted notes | Accepting facts without source checks |
| Drafting | Building from your own outline | Publishing AI wording with light edits |
| Editing | Checking clarity and gaps | Assuming tools catch every problem |

The best AI workflow is boring in the right places. It leaves a trail. It separates ideation from verification. And it treats authorship like something you prove through process, not something you assume because the doc has your name on it.

How to Properly Cite and Disclose AI Use

Citing AI is awkward because the rules are still patchy.

University library guidance notes that there are no universally established rules for citing AI, and the common recommendation is to treat it like personal communication, which doesn’t fit many creative and professional workflows well (USF Library guide).

That gray zone makes people think disclosure is optional. It isn’t always legally or institutionally required, but it’s often the smartest move.

When to disclose

You don’t need a confession booth every time AI helps you brainstorm a headline.

You should strongly consider disclosure when AI materially shaped:

- the wording of a published draft

- the research summary you relied on

- the translation or paraphrase of source material

- the structure of a client deliverable or academic submission

If you’re unsure, disclose lightly and plainly.

Copy-and-paste templates

Here are practical starting points.

For a blog post or newsletter

AI disclosure: AI tools assisted with brainstorming and early drafting for this piece. All claims were reviewed, edited, and finalized by the author.

For a YouTube description or podcast show notes

This episode description was developed with AI assistance for outline support and editing. Final wording, fact checks, and publishing decisions were completed by our team.

For internal marketing documentation

Draft developed with AI-assisted outlining and language suggestions. Human review completed for accuracy, originality, tone, and source alignment.

For academic contexts using personal communication style guidance

ChatGPT assisted with ideation or language support on [date]. Output was treated as a tool-generated aid and not as an authoritative source.

If you need help building clean attribution practices into your publishing process, this guide on how to add citation is a useful practical resource.

Why disclosure helps your brand

Disclosure doesn’t weaken authority when it’s done well. It signals that you have standards.

Audiences don’t expect creators to reject modern tools. They expect creators to use them responsibly. A simple note can show that you didn’t outsource judgment, skip verification, or blur the line between assistance and authorship.

Transparency turns AI from a secret shortcut into an accountable production tool.

That is the ultimate goal. Not performative purity. Clear process.

Your Toughest AI Plagiarism Questions Answered

If I only use ChatGPT for ideas, is that plagiarism?

Usually no.

Idea generation alone is generally the lowest-risk use case, especially when the final structure, wording, examples, and judgment are yours. Trouble starts when “idea help” becomes draft dependence.

If I rewrite the AI output heavily, is it mine?

Maybe, but don’t treat editing as magic.

Heavy revision can make a piece original. Light polishing usually doesn’t. If the AI output determined the structure, examples, logic flow, and most of the phrasing, your role may have been editor rather than author.

Can plagiarism tools reliably catch AI-generated copying?

Not perfectly.

Traditional plagiarism tools and AI detectors solve different problems. One looks for textual overlap. The other looks for machine-like writing patterns. Neither replaces human review, and neither can tell you whether your use of AI was ethically disclosed.

Is using ChatGPT plagiarism in client work?

It depends on the agreement and the context.

If a client expects fully original human-written work and you secretly use AI to generate substantial portions, that can create trust and contract problems even if nobody uses the word “plagiarism.” If the client allows AI-assisted workflows, the key is quality, originality, and clarity about process.

What about repurposing my own past content with AI?

That’s often fine, but it can still get messy.

If you reuse your own material across platforms, make sure you’re adapting it, not just repackaging it lazily. For publishers and teams, it helps to track where ideas, quotes, and source passages originated so you don’t accidentally duplicate language or recycle old assets in ways that confuse audiences.

If ChatGPT gives me facts, do I need to cite ChatGPT or the original source?

Cite the original source whenever possible.

ChatGPT is not a dependable endpoint for factual claims. Treat it like a helper that points you toward material to verify, not like a scholar you can quote with confidence.

How do I document an ethical AI process?

Keep a simple paper trail.

That can include:

- prompt notes

- source documents

- transcript links

- version history

- disclosure language

- editorial review notes

You don’t need a courtroom exhibit. You need enough documentation to show how a draft moved from AI assistance to accountable human authorship.
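If your team prefers something more structured than a notes file, the list above can be sketched as a small record in Python. Everything here is hypothetical: the class name, the field names, and the choice of which items count as required are assumptions for illustration, not a standard. Version history is left out on the assumption that your document tool already tracks it.

```python
from dataclasses import dataclass, field


@dataclass
class DraftRecord:
    """Hypothetical paper-trail entry for one AI-assisted draft."""

    title: str
    prompt_notes: list[str] = field(default_factory=list)
    source_documents: list[str] = field(default_factory=list)
    transcript_links: list[str] = field(default_factory=list)  # optional: not every piece has one
    disclosure_language: str = ""
    editorial_review_notes: list[str] = field(default_factory=list)

    def missing_items(self) -> list[str]:
        """Return the required paper-trail items that are still empty."""
        required = {
            "prompt notes": self.prompt_notes,
            "source documents": self.source_documents,
            "disclosure language": self.disclosure_language,
            "editorial review notes": self.editorial_review_notes,
        }
        return [name for name, value in required.items() if not value]


record = DraftRecord(
    title="Episode 12 recap post",
    prompt_notes=["Asked for three outline options based on my own transcript"],
)
print(record.missing_items())
# ['source documents', 'disclosure language', 'editorial review notes']
```

An empty list from `missing_items()` does not prove a draft is original. It only shows that the trail exists if someone asks how the piece was made.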

What’s the safest overall rule?

Use AI for speed. Keep humans responsible for truth, originality, and credit.

That rule works in classrooms, newsrooms, studios, marketing teams, and creator businesses.

If you’re building a serious content operation, the challenge isn’t just making more content. It’s creating a process you can trust as you scale. Contesimal helps teams organize research, surface value from their content libraries, and support human-AI collaboration with more clarity and control, so your archive becomes a growth asset instead of a messy folder graveyard.