You spent two weeks on the episode outline. You booked the guest people in your niche recognize. You clipped trailers, wrote the email, queued the social posts, hit publish, and waited for the lift that seemed obvious while you were making it.

Instead, the numbers flatlined.

That kind of miss stings more when the work was good. Not lazy-good. Thoughtful, carefully produced, team-hours-good. Most creators respond the same way at first. They move on fast, tell themselves the algorithm was weird, and put all their energy into the next thing.

That’s exactly how teams repeat the same mistake with better lighting.

When Great Content Ideas Don't Land

A bad launch day creates a very specific kind of fog. The editor thinks the hook was off. The host thinks the guest missed the audience. The marketer blames timing. The social lead says the clips were too broad. Everyone has a plausible explanation, and none of them line up.

That’s where a post mortem document template earns its keep. Not as a punishment. Not as a formal way to say, “Who blew it?” It’s a method for freezing the facts before memory gets selective and opinions get louder than evidence.

Teams often first encounter post-mortems through software or operations writing. The problem is that those templates are built around outages, alerts, and recovery workflows. They’re useful for engineers, but they often break down for podcasters, bloggers, YouTubers, and publishers trying to diagnose a dead-on-arrival article or a video with a brutal retention drop. Research summarized by incident.io’s incident post-mortem template hub notes that existing post-mortem templates overwhelmingly focus on technical incidents, leaving a major gap for content creators, and that 68% of content marketers cite metrics like audience drop-off or SEO underperformance as barriers to effective iteration.

Why content teams need a different lens

A content failure rarely has one clean cause.

Sometimes the piece was strong, but the packaging was wrong. Sometimes the packaging worked, but the audience targeting missed. Sometimes the content itself wasn’t the problem at all. The production schedule slipped, approvals got rushed, the email went late, and nobody noticed the episode title contradicted the thumbnail promise.

Practical rule: If your review ends with “the algorithm changed,” you didn’t finish the review.

A good content post-mortem keeps creative work creative, but makes the analysis disciplined. It treats weak performance as information. That shift matters. The teams that improve fastest aren’t the ones with the fewest misses. They’re the ones that document misses well enough to stop paying tuition twice.

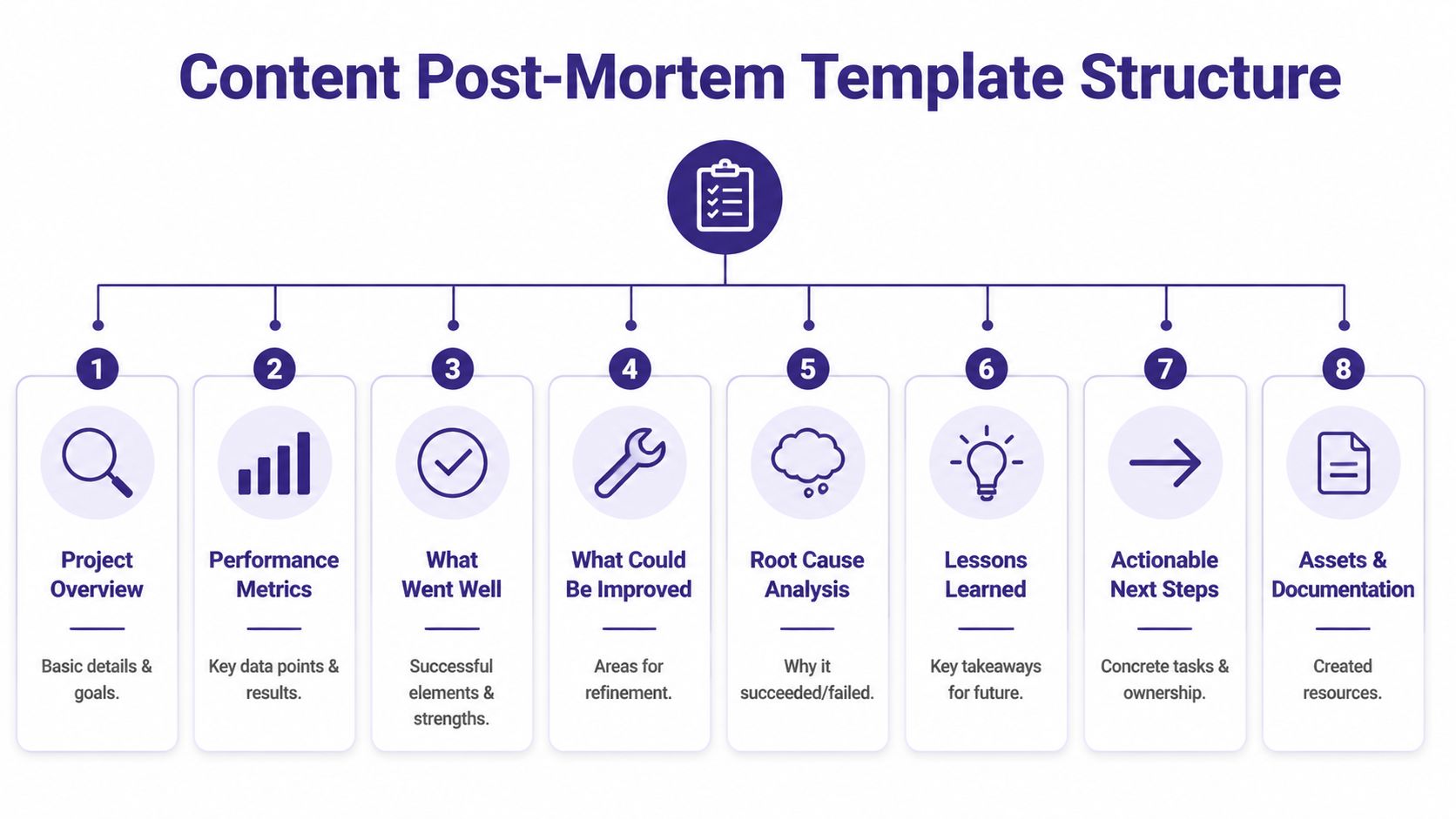

The Anatomy of a Content Post-Mortem Template

Most solid templates share the same backbone. A standard post-mortem template usually includes six core components: a timeline, root cause analysis, impact assessment, mitigation steps, documented learnings, and specific follow-up actions, as outlined in UptimeRobot’s guide to post-mortem templates. For content teams, the difference isn’t the structure. It’s what goes inside each field.

The eight sections worth keeping

I use eight sections because six is often too tight for creative work and ten is where teams start avoiding the doc.

| Section | What it captures in content teams | What to avoid |
|---|---|---|
| Project overview | What shipped, for whom, and what success was supposed to look like | Vague summaries like “episode underperformed” |
| Timeline | Key production, publishing, and promotion moments | Reconstructed guesses |
| Performance impact | What happened to reach, engagement, search, or conversions | Cherry-picking one metric |
| What went well | Elements worth repeating | Turning this into self-congratulation |
| What went wrong | Execution gaps, process misses, distribution failures | Blame language |
| Root cause analysis | Why the miss happened beneath the surface | Stopping at the first plausible answer |
| Lessons learned | Principles to carry forward | Generic advice nobody can act on |
| Action items | Owners, due dates, and next changes | “Be better next time” |
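Teams that keep reviews in a shared docs folder or repo sometimes script the blank template so every post-mortem starts from the same sections instead of a blank page. Here is a minimal sketch in Python, assuming Markdown output. The `build_skeleton` function, the prompt wording, and the sample title are hypothetical; only the section names come from the table.

```python
# Hypothetical helper: builds a blank post-mortem skeleton in Markdown.
# Section names follow the eight-section table; the prompt wording is illustrative.
SECTIONS = [
    ("Project overview", "What shipped, for whom, and what success was supposed to look like"),
    ("Timeline", "Production, publishing, and promotion moments, each with its evidence"),
    ("Performance impact", "What happened to reach, engagement, search, or conversions"),
    ("What went well", "Elements worth repeating"),
    ("What went wrong", "Execution gaps, process misses, distribution failures"),
    ("Root cause analysis", "Why the miss happened beneath the surface"),
    ("Lessons learned", "Principles to carry forward"),
    ("Action items", "One owner, a due date, a definition of done, a tracker link"),
]


def build_skeleton(title: str, publish_date: str) -> str:
    """Return a Markdown document with one heading and one prompt per section."""
    lines = [f"# Post-mortem: {title} (published {publish_date})", ""]
    for name, prompt in SECTIONS:
        lines.append(f"## {name}")
        lines.append(f"_{prompt}_")
        lines.append("")
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_skeleton("Example episode", "2024-05-14"))
```

Keeping the prompts in the skeleton itself means the person filling it in sees what each section is for without opening a separate guide.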

Project overview

Start with the facts that let anyone understand the piece without opening five different tabs.

Include the title, format, target audience, owner, publish date, campaign goal, and the core hypothesis. The hypothesis matters because content teams often judge outcomes against an unstated expectation. That makes discussions messy fast.

Useful prompts:

- What was the asset? Podcast episode, article, newsletter, short-form clip series, landing page, webinar replay.

- Who was it for? Existing subscribers, first-time discoverers, search traffic, sponsors, premium members.

- What did we expect it to do? Drive listens, rank for a query cluster, push trial signups, revive an older content bucket.

Timeline of production and promotion

The timeline is where weak opinions start losing ground.

List what happened in order. Include brief dates or timestamps for ideation, draft completion, editorial review, asset creation, upload, publication, email send, social posts, paid amplification, and any last-minute changes. In project reviews, rigorous timelines matter because they expose where assumptions replaced evidence and where delays entered the workflow, which is a point emphasized in Craft’s post-mortem analysis template overview.

For content, this often reveals patterns like these:

- The title changed late and nobody updated the thumbnail copy.

- The publish date moved but the email stayed on the original schedule.

- The short clips went out first and spoiled the core reveal.

- The article published before internal links were added, weakening discoverability.

The timeline should answer one question cleanly: what actually happened, in what order, and based on what evidence?
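If the timeline is exported from a spreadsheet or project tool, sorting it and checking a few ordering rules can catch misses like an email that went out before the episode was live. Here is a minimal sketch, assuming each event has a timestamp, a label, and a hypothetical `kind` field (`"production"`, `"publish"`, or `"promo"`). The sample events are invented, and the sketch checks only that one rule.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class TimelineEvent:
    at: datetime
    label: str
    kind: str  # hypothetical categories: "production", "publish", or "promo"


def promo_before_publish(events: list[TimelineEvent]) -> list[TimelineEvent]:
    """Return promotion events that happened before the first publish event."""
    ordered = sorted(events, key=lambda e: e.at)
    publish_times = [e.at for e in ordered if e.kind == "publish"]
    promos = [e for e in ordered if e.kind == "promo"]
    if not publish_times:
        # Nothing was published, so every promotion event is premature.
        return promos
    first_publish = publish_times[0]
    return [e for e in promos if e.at < first_publish]


if __name__ == "__main__":
    events = [
        TimelineEvent(datetime(2024, 5, 14, 9, 0), "Newsletter send", "promo"),
        TimelineEvent(datetime(2024, 5, 13, 17, 0), "Final edit locked", "production"),
        TimelineEvent(datetime(2024, 5, 15, 8, 0), "Episode published", "publish"),
    ]
    for e in promo_before_publish(events):
        print(f"{e.at:%Y-%m-%d %H:%M}  {e.label} went out before publication")
```

Sorting first matters because reconstructed timelines are rarely entered in the order things happened.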

Performance impact

For content teams, the impact section needs translating. In engineering, impact may mean downtime and recovery time. In creative operations, impact means audience and business consequences.

Your impact section should cover:

- Reach impact such as weak impressions, stalled subscriber response, or poor referral pickup

- Engagement impact like watch-time cliffs, low completion, weak saves, poor click-through, or negative comment themes

- Search impact such as poor rankings, low discovery, or weak topical alignment

- Revenue or business impact if relevant, including missed sponsor value, failed launch support, or low lead quality

Keep the language factual. If you don’t have a metric, don’t fake precision. Write what you know.

A practical companion to this stage is a pre-work review using a content audit checklist. Many “content failures” turn out to be library and positioning problems that were visible before production started.

What went well and what could be improved

Teams skip this balance at their own risk.

If you only document what failed, people get defensive and the meeting quality drops. If you only celebrate what worked, the document turns into theater. You need both.

What went well might include a thorough guest preparation process, fast editing turnaround, a strong opening segment, useful audience questions, or effective cross-team communication. What could be improved is the harder half. Be specific. “Promotion was weak” isn’t useful. “We relied on one newsletter placement and had no secondary distribution plan” is useful.

If your team already runs structured reflection rituals, borrowed formats like sprint retrospective templates can help shape this section, especially when you want to separate team-process issues from content-performance issues.

Root cause analysis

This is the heart of the post mortem document template.

Don’t write “topic didn’t resonate” unless you can support it. That’s often a summary, not a cause. Push deeper. Ask why the topic didn’t resonate. Was it a mismatch with subscriber expectations? Weak packaging? Poor timing? Thin angle differentiation? A format the audience historically ignores?

A simple chain might look like this:

- The video underperformed

- Because viewers clicked less than expected

- Because the thumbnail and title promised a different takeaway than the opening delivered

- Because packaging was finalized before the edit was locked

- Because the production workflow doesn’t require a final packaging review against the finished cut

That final line is usually where the useful action lives.

Lessons learned and action items

Lessons are not tasks. They’re operating principles.

Examples:

- Packaging should be reviewed against the final exported asset, not the rough cut.

- Guest selection needs an audience-fit check, not just a reputation check.

- Posts targeting search need internal-link planning before publication.

Action items are different. They need owners and dates. The standard best practice is simple and strict:

- One owner per action

- A due date

- A clear definition of done

- A place where the task will be tracked

Without those, the doc becomes a nice piece of writing and nothing more.
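If action items live in a spreadsheet or tracker export, those four requirements are easy to check mechanically before the review is closed. Here is a minimal sketch, assuming a simple record per action item. The field names, the `problems` function, and the crude single-owner heuristic are assumptions, not features of any particular tracker.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ActionItem:
    title: str
    owner: str
    due: Optional[date]
    definition_of_done: str
    tracker_url: str  # hypothetical: link to the task in the team's real tracker


def problems(item: ActionItem) -> list[str]:
    """Return the checks an action item fails; an empty list means it passes."""
    issues = []
    owner = item.owner.strip()
    # Crude single-owner heuristic: reject blanks and names joined by common separators.
    if not owner or any(sep in owner for sep in (",", "/", "&", " and ")):
        issues.append("needs exactly one owner")
    if item.due is None:
        issues.append("needs a due date")
    if not item.definition_of_done.strip():
        issues.append("needs a definition of done")
    if not item.tracker_url.strip():
        issues.append("needs a tracked task")
    return issues


if __name__ == "__main__":
    vague = ActionItem("Improve episode promotion", "Editor and producer", None, "", "")
    print(problems(vague))
```

A check like this can't judge whether the definition of done is any good, but it does stop an item like “improve promotion” from leaving the meeting with no owner and no date.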

How to Run a Blameless Post-Mortem Meeting

A strong template can still produce a terrible meeting.

The usual failure mode is predictable. Someone opens analytics. Somebody else starts defending their department. The loudest theory takes over. Fifteen minutes later, the group has decided the platform changed, the market is saturated, and everyone should work harder next time.

That’s not analysis. That’s stress wearing a professional outfit.

Who should be in the room

Invite the people closest to the work and the result.

For most content reviews, that means a small group:

- Core creator or editor who shaped the piece

- Channel owner for YouTube, podcast, blog, newsletter, or social distribution

- Producer or project manager who can speak to timeline and workflow

- Growth or audience lead if promotion played a major role

- Decision-maker only if they can help remove blockers, not dominate the discussion

Don’t turn it into an all-hands performance trial. Small groups tell the truth faster.

A useful prep habit is to centralize the draft, comments, assets, and decision history before the meeting starts. That’s the difference between a real post-mortem and a memory contest. Teams trying to improve handoffs and review visibility usually benefit from a stronger collaboration workflow, which is why resources on project management collaboration matter here more than people expect.

Ground rules that actually work

State the rules out loud. It sounds basic because it is basic.

Use language like this:

- We’re reviewing systems and decisions, not personal worth

- We’ll separate facts from interpretations

- We’ll name contributors to failure, not hunt for a villain

- We won’t leave with vague advice

Then enforce it. If someone says, “The editor missed it,” reframe it. Ask what in the review process allowed the miss to survive.

A blameless meeting isn’t soft. It’s stricter than a blame meeting because it forces the team to explain the system, not just point at a person.

Use the Five Whys properly

The Five Whys technique works because it forces teams past easy answers. A structured methodology that includes Five Whys helps teams drill from surface symptoms to causes, and teams using consistent frameworks report faster pattern detection and a 15-20% reduction in recurring content failures, according to Pixelmatters’ guide to structuring incident post-mortems.

A content example:

Why did the video get low views?

Because click-through was weak.

Why was click-through weak?

Because the thumbnail and title didn’t match the audience’s actual interest.

Why didn’t they match?

Because the packaging emphasized a broad promise instead of the specific payoff in the video.

Why did packaging drift from the video’s payoff?

Because packaging was drafted from the outline, not the final cut.

Why was packaging drafted before the final cut?

Because the production process rewards speed to publish and has no final alignment checkpoint.

That final answer gives you a fix. “The algorithm changed” never does.
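Teams that log reviews in a shared tool can store the chain as ordered question-and-answer pairs and run a quick sanity check before closing the review. Here is a minimal sketch. `review_chain`, the five-step minimum, and the `DEAD_ENDS` phrase list are hypothetical conventions. Substring matching is a blunt instrument that only flags a chain for a human to look at again.

```python
# Hypothetical phrases that suggest a chain stopped at an excuse rather than a cause.
DEAD_ENDS = ("algorithm changed", "market is saturated", "work harder")


def review_chain(chain: list[tuple[str, str]], min_depth: int = 5) -> list[str]:
    """Check a Five Whys chain of (question, answer) pairs and return warnings."""
    if not chain:
        return ["chain is empty"]
    warnings = []
    if len(chain) < min_depth:
        warnings.append(f"chain has {len(chain)} of {min_depth} whys; keep asking")
    # Only the final answer matters here: it should name a system cause.
    final_answer = chain[-1][1].lower()
    if any(phrase in final_answer for phrase in DEAD_ENDS):
        warnings.append("final answer names an excuse, not a system cause")
    return warnings


if __name__ == "__main__":
    shallow = [("Why did the video get low views?", "Because the algorithm changed.")]
    print(review_chain(shallow))
```

Run against the five-step video chain above, the check returns no warnings. The one-line “algorithm changed” chain gets flagged twice.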

Keep the meeting moving

The facilitator matters more than the template.

Use a sequence like this:

- Start with timeline review so everyone agrees on what happened.

- Review impact briefly so the team understands why the discussion matters.

- Surface contributing factors before jumping to one root cause.

- Run Five Whys on the biggest factor.

- Convert findings into action items before the room loses energy.

A good facilitator also protects the room from two bad habits. First, over-explaining obvious facts. Second, jumping to solutions before the cause is clear.

Post-Mortem Examples for Different Content Failures

A creator ships what looks like a winner. The guest is recognizable. The article is polished. The video took two extra rounds to get right. Then the numbers come in flat, and the first explanation in Slack is usually too simple to be useful.

That is where examples help. Content teams rarely fail in the same way software teams do, and generic post-mortem advice misses that difference. A podcast can lose listeners in the first five minutes. An article can be well written and still die on arrival because the search intent was wrong. A video can hold attention until one specific segment, then drop off a cliff because the promise and the payoff drifted apart.

The template stays largely the same across those cases. The evidence changes. So does the language the team uses to diagnose what happened.

Example one, the podcast episode flop

A weekly business podcast lands a well-known founder and expects a strong episode. Downloads start decently. Completion drops early, replies are thin, and the episode does little for newsletter growth.

Here is what that post-mortem summary might look like:

| Field | Example entry |
|---|---|
| Project overview | Interview episode with a known founder, aimed at growth-stage operators |
| Intended outcome | Strong completion rate and newsletter signups from the episode page |
| What happened | Initial downloads were acceptable, but listeners exited early and response was muted |
| Primary issue | Guest selection favored reputation over audience relevance |

This is a common creative failure. Teams assume name recognition will carry the episode. It rarely does on its own.

The useful timeline is not “we published Tuesday and it underperformed.” It is “we booked fast because the guest had status, we shortened the pre-interview, we left the best material for later in the cut, and we promoted the guest’s credibility more than the listener payoff.” Once you lay that out, the miss becomes easier to fix.

Root cause notes

The first explanation might be “our audience didn’t care about this guest.” That still sits too close to the surface.

A better diagnosis looks like this:

- Booking criteria overweighted prestige

- The opening delayed the practical value

- Promo clips sold authority, not usefulness

- The team skipped comparison against prior high-retention episodes

That points to a process problem, not just a bad outcome.

Lessons and actions

The lesson is not to avoid big guests. The lesson is to screen for audience fit with the same rigor you use for perceived reach.

Useful follow-ups include:

- Add a guest-fit scorecard before booking is finalized

- Require the promised topic to appear in the opening segment

- Review planned episodes against prior high-retention formats

- Write captions and cutdowns around listener pain points, not guest prestige

Example two, the blog post that never ranked

This one stings because the draft is often strong.

A team publishes a long-form article around a priority keyword. The page is well designed. The writing is solid. A few weeks later, search traffic is weak, referral traffic is nearly nonexistent, and nobody can agree whether the problem was SEO, promotion, or the topic itself.

A filled-out summary might read like this:

| Field | Example entry |
|---|---|
| Project overview | Long-form article targeting a commercially important search topic |
| Intended outcome | Search visibility, assisted conversions, and stronger topic-cluster coverage |
| What happened | Low discovery from search and little distribution lift after publication |
| Primary issue | The article answered the topic well, but not in the way searchers wanted |

Content post-mortems need more nuance than generic project reviews. “The post didn’t rank” is only the visible symptom. The underlying issue may sit in planning, packaging, internal linking, or promotion.

In practice, I see four recurring causes:

- The keyword looked promising during planning, but intent shifted or was misread.

- The draft answered adjacent questions rather than the exact one users typed.

- The page launched without enough internal links or distribution support.

- No one scheduled a refresh checkpoint, so the team treated publish day as the finish line.

Root cause notes

The shallow version is “SEO is competitive.”

The useful version is “we chose a query before validating the current SERP, then wrote for depth instead of fit.” That diagnosis gives the team something to change in the brief, review process, and publish checklist.

If the article also has repurposing potential, fold that into the review. A post that misses in search can still perform in another format if the core idea is strong, which is why a documented content repurposing strategy for underperforming assets becomes operationally useful, not just editorially nice to have.

Lessons and actions

Strong action items here are specific:

- Add a final SERP and intent check before approving the outline

- Define internal-link targets in the brief

- Separate “rank for this query” from “build authority on this topic”

- Set a refresh review date at publish time

Teams that want a wider frame for recurring planning and execution mistakes can also benefit from understanding why projects fail. The patterns carry over cleanly to content work. Weak scoping, fuzzy ownership, and poor audience understanding usually show up long before the traffic report does.

Example three, the video with an engagement cliff

Video failures need their own example because the evidence is more granular.

A channel publishes a promising tutorial. Click-through is fine. Early views look healthy. Then audience retention collapses at a single point in the first third of the video.

A post-mortem summary could look like this:

| Field | Example entry |
|---|---|
| Project overview | Tutorial video built to attract new viewers and convert them into subscribers |
| Intended outcome | Strong average view duration, subscriber growth, and clip potential |
| What happened | Video attracted clicks but lost a large share of viewers at a specific segment |
| Primary issue | The structure delayed the promised payoff and inserted context viewers had not agreed to hear yet |

The mistake is usually structural, not technical. The title and thumbnail promise one thing. The opening minute spends too long setting up background, creator opinion, or scene-setting that matters to the team more than to the viewer.

Root cause notes

The retention graph gives you sharper evidence than a general performance summary. If the drop happens at one transition, one aside, or one long setup section, review that exact segment in context.
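If you can export the retention curve as pairs of seconds-into-video and percent-of-viewers-still-watching, a few lines will locate the steepest drop, so the team knows exactly which segment to rewatch. Here is a minimal sketch. The export format, the `steepest_drop` function, and the sample numbers are assumptions, and it treats samples as evenly spaced.

```python
def steepest_drop(curve: list[tuple[int, float]]) -> tuple[int, int, float]:
    """Return (start_sec, end_sec, drop_in_points) for the largest fall between samples.

    `curve` holds (seconds_into_video, percent_of_viewers_still_watching) pairs,
    assumed sorted by time and sampled at roughly even intervals.
    """
    if len(curve) < 2:
        raise ValueError("need at least two samples")
    best = (curve[0][0], curve[1][0], curve[0][1] - curve[1][1])
    # Compare each sample with the next one and keep the biggest decline.
    for (t0, p0), (t1, p1) in zip(curve, curve[1:]):
        drop = p0 - p1
        if drop > best[2]:
            best = (t0, t1, drop)
    return best


if __name__ == "__main__":
    # Invented sample curve for illustration only.
    sample = [(0, 100.0), (30, 82.0), (60, 76.0), (90, 74.0), (120, 51.0), (150, 49.0)]
    start, end, drop = steepest_drop(sample)
    print(f"Biggest drop: {drop:.1f} points between {start}s and {end}s")
```

With unevenly spaced samples, dividing each drop by the gap in seconds gives a fairer comparison. Either way, the output is a timestamp range to review in the edit, not a diagnosis on its own.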

Contributing factors often include:

- An opening built for completeness rather than momentum

- A mismatch between packaging and first-minute delivery

- Too much context before the practical payoff

- No retention benchmark for similar videos in the same format

Lessons and actions

The fixes should be tied to the point of failure:

- Rewrite first-minute scripts to deliver the promise faster

- Review packaging against the final edit, not the outline

- Mark known retention-risk sections during edit review

- Compare retention curves across videos with the same format and audience intent

Example four, the content system incident

Some content failures are operational, but they still need a content-specific review.

A publisher migrates a large video library into a new system. The migration finishes on schedule. A day later, older articles across the archive show broken embeds and missing media on live pages.

That summary might look like this:

| Field | Example entry |
|---|---|
| Project overview | Migration of historical video assets to a new library and embed structure |
| Intended outcome | Better organization, reuse, and library searchability |
| What happened | Archived articles displayed broken embeds after migration |
| Primary issue | Migration planning covered assets, but missed publishing dependencies on live pages |

This type of failure sits between editorial operations and technical operations. That is exactly why content teams need their own post-mortem template. The review has to account for archive integrity, CMS behavior, reuse patterns, and ownership between content ops and editors.

Root cause notes

The migration itself may have worked as planned. The failure came from incomplete dependency mapping and narrow QA.

Common contributing factors:

- Archive checks covered assets, not live article behavior

- Ownership for post-migration QA was unclear

- Success criteria focused on transfer completion

- Rollback conditions were never defined

Lessons and actions

Good action items here are operational and concrete:

- Create a dependency checklist before future migrations

- Test a representative sample of live archive pages before signoff

- Assign one owner for archive QA

- Define rollback triggers before launch

What these examples have in common

Different formats produce different symptoms, but the pattern underneath is familiar. A weak podcast episode, a dead-on-arrival article, a video retention crash, and a broken media migration all reveal the same thing. The visible failure happened late. The actual mistake started earlier.

That is why a useful content post-mortem focuses on decisions, assumptions, and handoffs. It gives creative teams a way to examine flops without turning the review into a blame session or a vague discussion about “quality.” Done well, these documents improve briefs, packaging, editing, distribution, and production systems at the same time.

Turning Insights into Action and Assets

Most post-mortems fail at the exact moment they should start paying off.

The meeting happens. The notes are solid. Everyone nods at the right lessons. Then the document goes into a folder called “retrospectives” or “ops” or “content learnings,” and nobody opens it again until the same mistake reappears wearing a different shirt.

That’s not a documentation problem. It’s a follow-through problem. Data summarized in iLert’s postmortem template guide shows that 30-50% of action items from post-mortems are abandoned without proper tracking tools. The same source notes that 55% of video creators repeat mistakes on new projects because lessons from past flops weren’t systematically tracked and enforced.

Turn every action item into work, not wishes

The fix is simple, but it requires discipline.

After the meeting, convert every approved action into a task in the same system your team already uses for real work. Not a side doc. Not a “we should remember this” note. A real task with a real owner.

Use a short quality filter:

- Owner is singular. One person owns the next step.

- Outcome is visible. You can tell when the task is done.

- Deadline exists. Not “soon.”

- Scope is tight. If it’s too broad, split it.

Bad action item: improve episode promotion.

Good action item: create a pre-publish distribution checklist covering newsletter, short clips, cross-post copy, and fallback promotion channel.

Build a learning library, not a graveyard of docs

This is where professional creators separate from hobbyists.

A post-mortem shouldn’t only prevent recurrence. It should also improve future planning. That means storing lessons in a way the team can retrieve when planning the next episode, article, campaign, or archive refresh.

Useful categories include:

- Packaging lessons such as thumbnail-title alignment, opening-hook patterns, and headline misses

- Audience lessons like guest fit, format fatigue, content bucket performance, and comment themes

- Workflow lessons including approval delays, brief quality, asset handoff issues, and publishing friction

- Repurposing lessons covering what clipped well, what translated across platforms, and what failed outside the original format

If you care about turning historical output into future value, this is also where a stronger content repurposing strategy becomes practical instead of theoretical. Failed content still has usable parts. Strong post-mortems show you which parts deserve a second life and which assumptions need to be retired.

Hard truth: Teams rarely suffer from a lack of lessons. They suffer from a lack of retrieval.

A searchable knowledge base changes the quality of future decisions. Before a team greenlights a new guest, topic, or format, they should be able to find past post-mortems tied to similar choices. Before republishing archive content, they should know which themes historically underperformed and why.

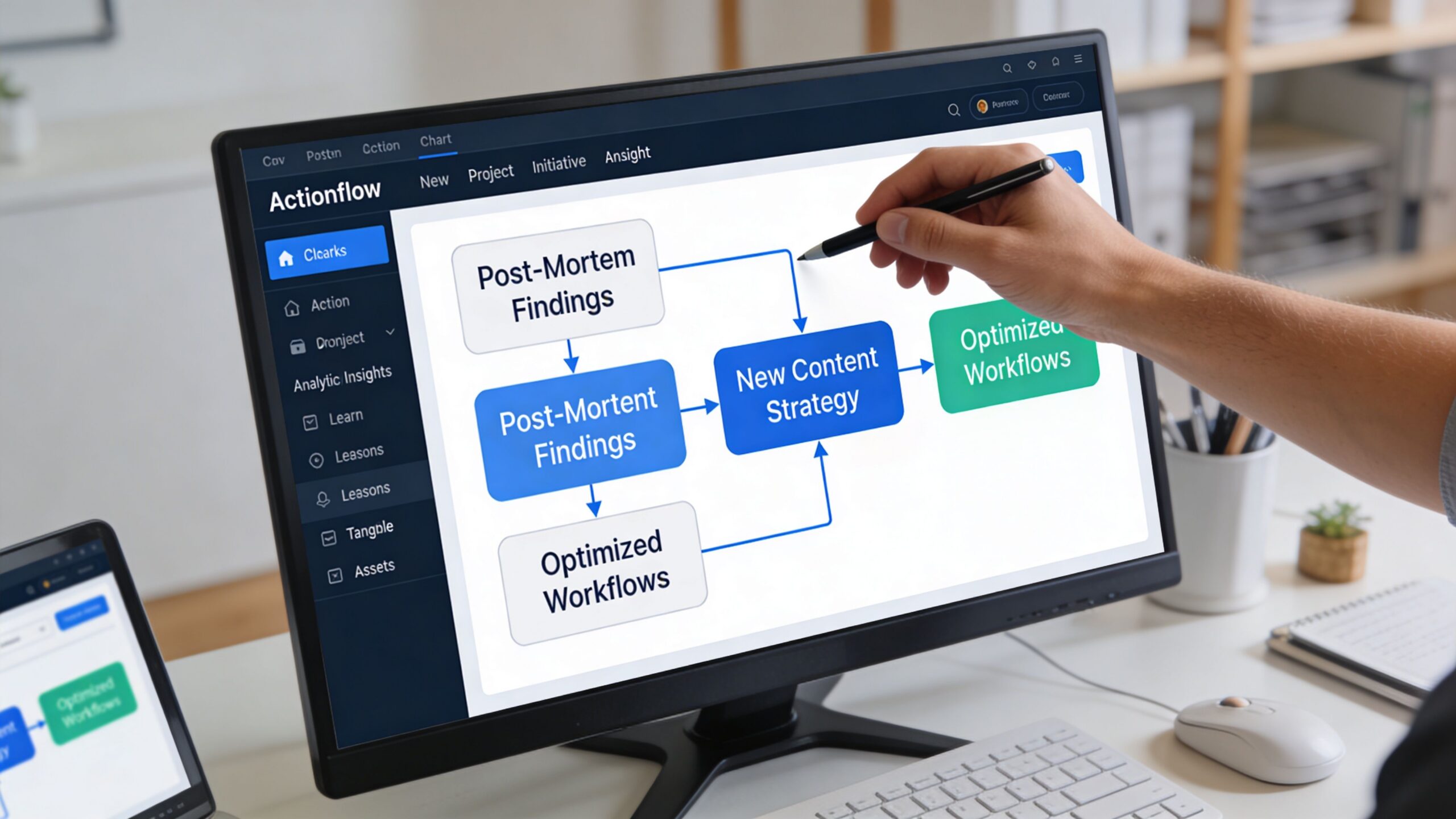


What to save from every post-mortem

Don’t just save the document. Save the reusable fragments.

A mature team captures these assets from each review:

| Asset type | Why it matters |
|---|---|
| Decision summary | Helps leaders revisit the core finding fast |
| Root cause tag | Makes pattern-finding easier across multiple post-mortems |
| Action status | Shows whether the lesson changed behavior |
| Reusable checklist update | Converts one mistake into prevention for future work |
| Content pattern note | Preserves audience or format insight for planning |

This is how a post mortem document template stops being a compliance exercise and becomes an engine for content quality. Every review sharpens the next brief. Every failure contributes to a stronger archive of operational judgment. Every miss makes the library smarter, if the team captures it properly.
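Root cause tags only pay off if someone counts them and can look them up. Here is a minimal sketch, assuming each saved post-mortem carries a title, a format, and a list of tags. The `PostMortem` record, the two helper functions, the tag vocabulary, and the sample library are invented for illustration.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class PostMortem:
    title: str
    content_format: str  # e.g. "podcast", "article", "video"
    root_cause_tags: list[str] = field(default_factory=list)


def recurring_causes(library: list[PostMortem], min_count: int = 2) -> list[tuple[str, int]]:
    """Return root cause tags seen at least `min_count` times, most common first."""
    counts = Counter(tag for pm in library for tag in pm.root_cause_tags)
    return [(tag, n) for tag, n in counts.most_common() if n >= min_count]


def find_by_tag(library: list[PostMortem], tag: str) -> list[str]:
    """Return titles of past post-mortems that share a root cause tag."""
    return [pm.title for pm in library if tag in pm.root_cause_tags]


if __name__ == "__main__":
    library = [
        PostMortem("Founder interview episode", "podcast", ["audience-fit", "packaging-mismatch"]),
        PostMortem("Keyword guide article", "article", ["intent-misread", "no-internal-links"]),
        PostMortem("Setup tutorial video", "video", ["packaging-mismatch", "slow-opening"]),
    ]
    print(recurring_causes(library))
    print(find_by_tag(library, "packaging-mismatch"))
```

The value comes less from the code than from agreeing on a small, stable tag vocabulary. Free-text tags drift, and drifting tags stop counting together.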

Fail Forward: Your New Growth Strategy

Content teams don’t grow by avoiding misses. They grow by getting more value out of them.

A good post mortem document template gives you a repeatable way to inspect what happened, separate facts from feelings, and improve the system behind the work. A blameless meeting keeps the analysis honest. Real examples make the process usable. Follow-through turns the document from reflection into improvement.

That’s the difference between publishing a lot and building a content operation.

When you review flops properly, you stop treating bad performance as random pain. It becomes research. You learn which formats deserve another shot, which packaging choices keep failing, which workflows introduce risk, and which assets can be repurposed instead of abandoned.

That shift compounds. Teams become less reactive, more precise, and much harder to derail after one disappointing launch.

If you want to turn old content, failed experiments, and buried team knowledge into something searchable and useful, Contesimal helps you organize your library, surface patterns across past work, and turn archived content into new creative and business value.